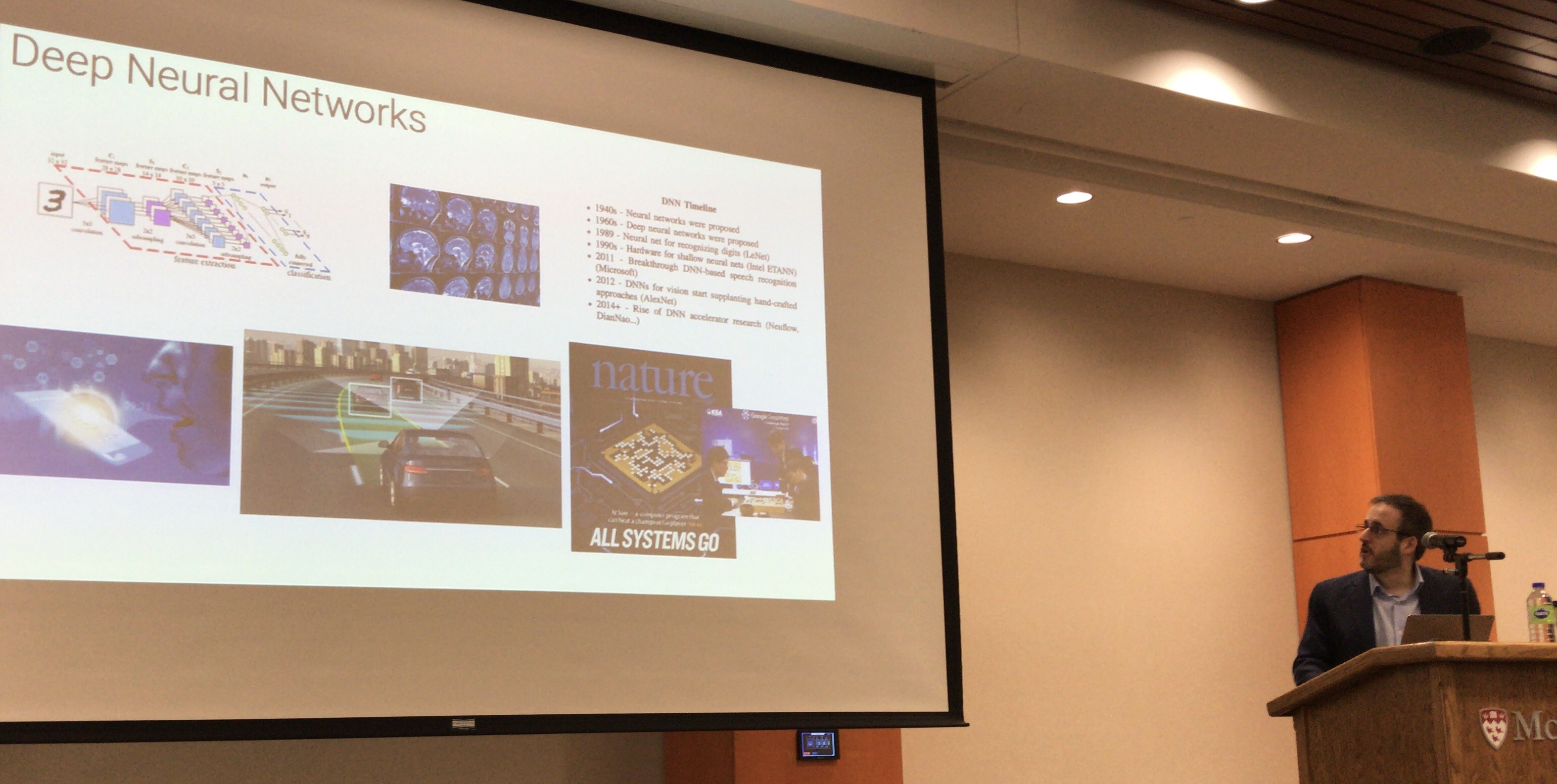

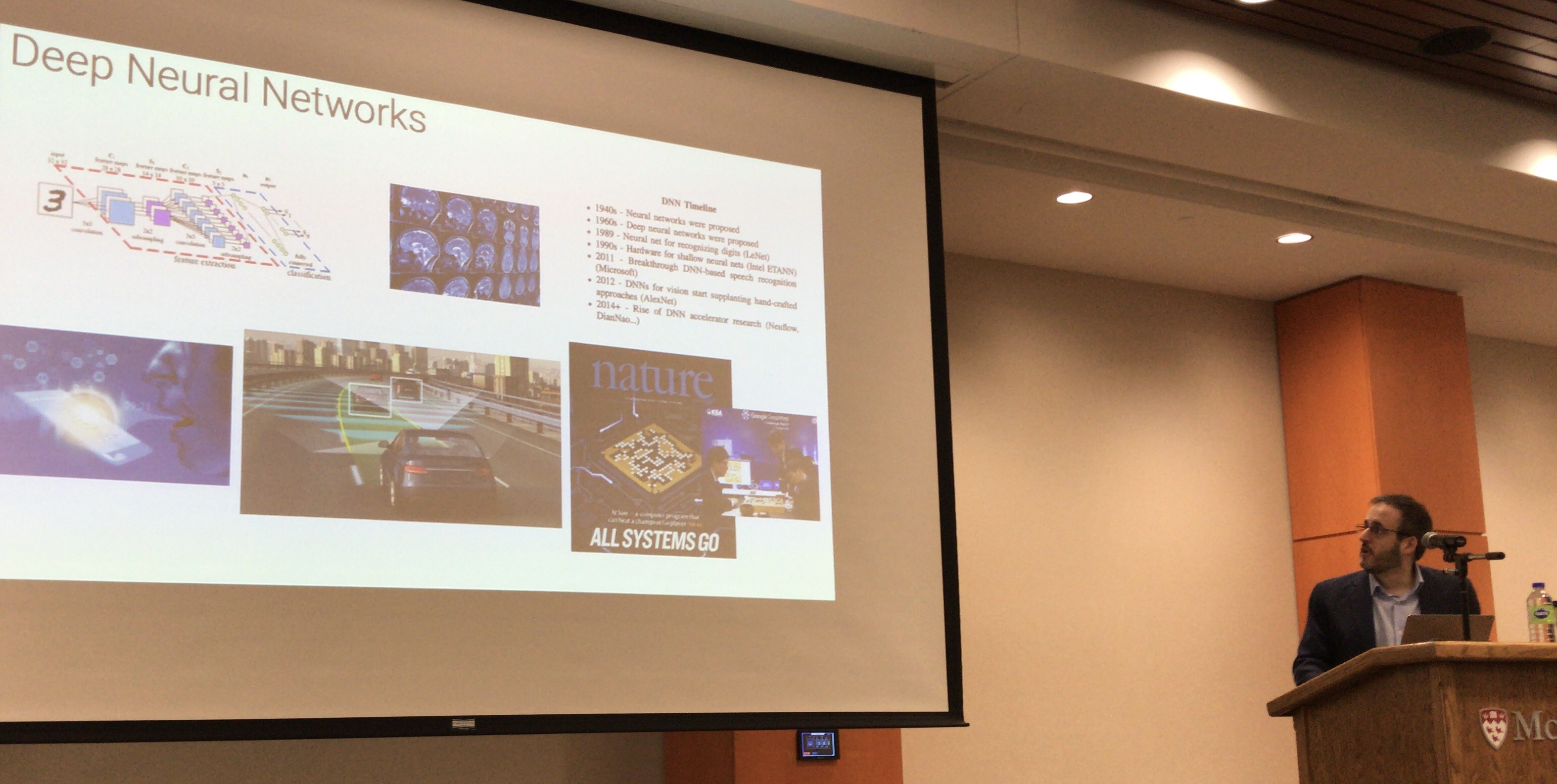

Profs. Brett H. Meyer and Warren Gross presented a tutorial on the optimization of hardware and software for deep learning at the 28th International IEEE Conference on Electrical Performance of Electronic Packaging and Systems (EPEPS) 2019 in Montreal today. First, Gross introduced machine learning in general, and deep learning in particular, from a computational perspective. He then summarized recent work on model complexity reduction, and presented research on custom architectures for DNN acceleration.

Meyer followed up with an introduction to multi-objective hyperparameter optimization. He motivated the need for automation in deep learning software design, and presented a variety of computer vision results from their tool, OPAL: Ordinary People Accelerating Learning. He then discussed various challenges when deploying deep learning to low-cost IoT systems, and presented early results from work with natural language processing algorithms targeting such devices.

IEEE EPEPS 2019 Conference Program

Machine learning (ML) is a key technology, with wide and growing application. Every layer in the computer system design stack has been touched by machine learning: from ML-enabled applications, to ML accelerators in hardware, to ML-enabled design tools, learning algorithms are leaving an indelible mark on computing. This tutorial, presented by Professors Warren J. Gross and Brett H. Meyer from the Department of Electrical and Computer Engineering at McGill University, will provide an introduction to machine learning and ML algorithm optimization. Professor Gross will begin by introducing the basics of neural networks and deep learning. He will then describe methodologies for the design of deep-learning accelerators and will give an overview of the main techniques used to efficiently map the computations used in deep learning to hardware. He will then describe recent work in custom hardware accelerators for deep learning.

Professor Meyer will continue by introducing how machine learning algorithms, and in particular, deep learning, can be optimized for more efficient execution. He’ll discuss the typical constraints of hardware running ML applications, and the related metrics used for optimization. He will then revisit deep learning hyperparameters, introduce hyperparameter optimization, and present recent results optimizing computer vision and natural language processing tasks.

Download Part 1

Download Part 2